Hello, everyone!

As promised, this series of posts about my work begins with a topic that makes for a good story: information security in the modern world, where anyone can write and launch their own application, sometimes even successfully, but ...

"We've got a security hole!!" - "Well, at least something's secure around here ..."

An old joke that will never lose its relevance, especially now

What we have now is a multitude of websites and applications written by AI for all sorts of tasks and purposes. It's one thing if you write an application about yourself and for yourself, like a book. There's no problem with that until such an application becomes public or, more interestingly, is created specifically to attract users

I'm not saying that AI is a really crappy tool for writing code. It's a great tool that helps you a lot in your work. But only when you understand what you're doing. And that's where we enter the brave new world of buggy software

And I'm not even talking yet about the mistakes we make in server configuration. In fairness, most modern app-building tools do a decent job out of the box: they keep .env files closed off from the outside and rely on managed database services, which are generally well protected on their own

The most interesting story begins when we start using this code and these services on our own infrastructure. This is where our adventure into the abyss begins

B for Backup

Next, I will analyze the main errors that I, as a security analyst, most often find in these applications:

Server configuration errors take a well-deserved first place on our list. You run nmap and see everything the server exposes to the outside world. Databases, open mail ports, internal services, everything is publicly available, just reach out and grab it. And you're lucky if the service has at least some authorization. Or request limits, or... Okay, fuck it - in 90 percent of cases, it's all just sticking out there with its ass exposed
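To get a feel for what an attacker sees, here is a rough sketch of the simplest version of that check, assuming plain TCP connects from the outside. It is not how nmap works internally, just the same idea in a few lines; the function name `open_ports` and the port list are my own choices for illustration. Only point it at machines you own

```python
import socket

# Common service ports: SSH, SMTP, HTTP, HTTPS, MySQL, PostgreSQL, Redis, MongoDB
COMMON_PORTS = [22, 25, 80, 443, 3306, 5432, 6379, 27017]


def open_ports(host, ports=COMMON_PORTS, timeout=0.5):
    """Return the ports from `ports` that accept a TCP connection on `host`."""
    found = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising on refusal
            if sock.connect_ex((host, port)) == 0:
                found.append(port)
    return found


# Example (hypothetical address, replace with your own server):
# print(open_ports("203.0.113.10"))
```

If a database port shows up in that list when you run it from outside your network, so will it for everyone else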

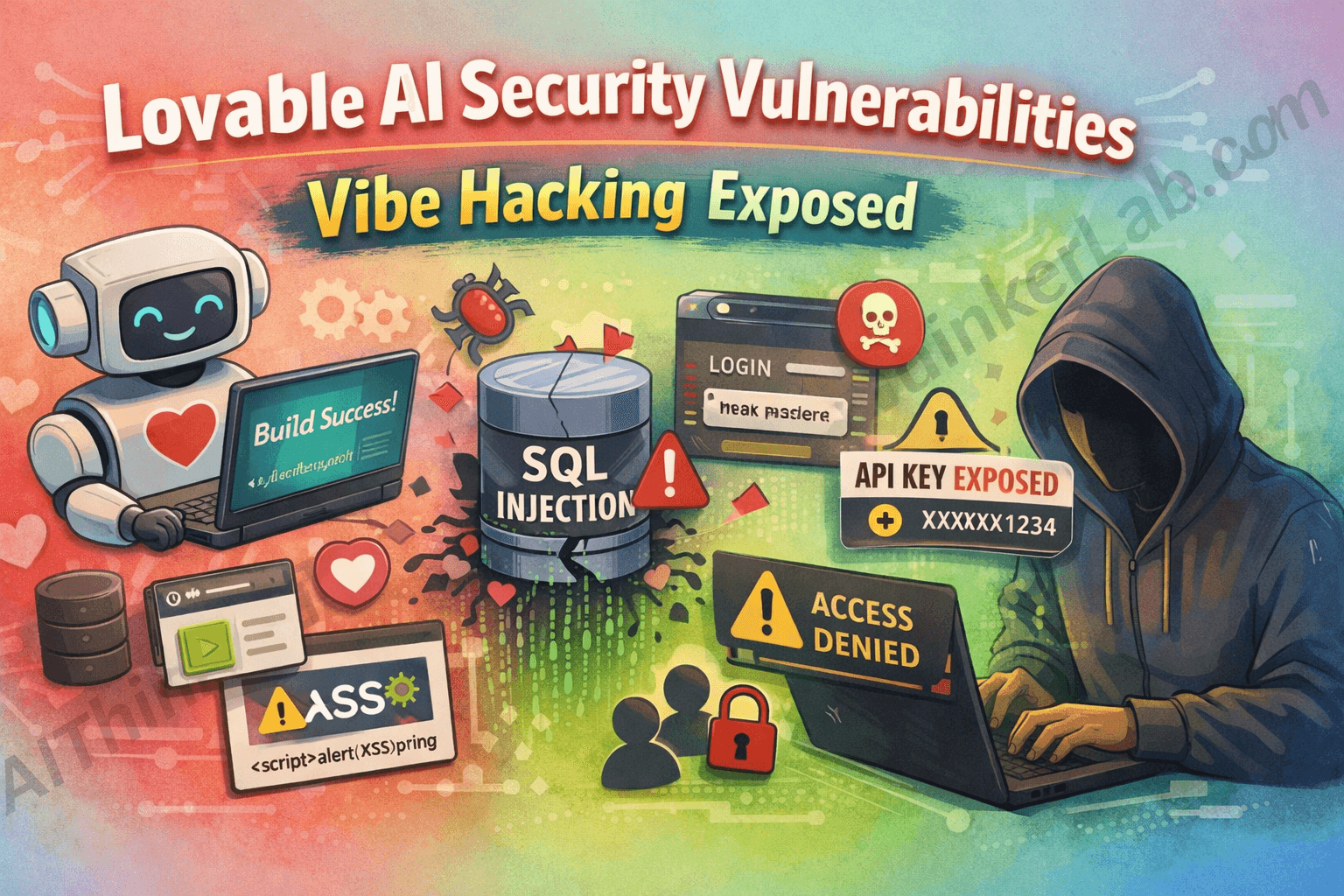

Same thing with APIs. Exposed? Yes! Authorization? No! Request limits? Fuck that
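For contrast, the bare minimum is not much code. Below is a minimal sketch, assuming a single shared API token and an in-memory sliding-window limit per client; `SlidingWindowLimiter` and `handle_request` are hypothetical names, and a real service would normally lean on its framework or gateway for both

```python
import hmac
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` requests per client within `window_seconds`."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window = window_seconds
        self._hits = defaultdict(deque)  # client_id -> timestamps of recent requests

    def allow(self, client_id, now=None):
        now = time.monotonic() if now is None else now
        hits = self._hits[client_id]
        # Drop timestamps that have fallen out of the window
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True


def handle_request(token, client_id, limiter, expected_token, now=None):
    """Return an HTTP-style status code for one incoming request."""
    # Rate-limit first, so token guessing is throttled as well
    if not limiter.allow(client_id, now):
        return 429
    # Constant-time comparison avoids leaking the token through timing
    if not hmac.compare_digest(token.encode(), expected_token.encode()):
        return 401
    return 200
```

The limit is checked before the token on purpose: otherwise anyone can hammer the endpoint with guesses for free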

Come and get user data - €1 per bundle at the market, free for the first person to find it, no registration or SMS required

Seriously, any bot can find anything on the internet in seconds. Then they'll kick it open and poke a hole in it. And the most interesting thing is that you won't even notice: the vast majority of startups currently on the market do not monitor the volume of requests hitting their servers, so when someone starts scanning the exposed services, nothing alerts anyone. And believe me, protection against this is much cheaper than investigating a hack later
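Even crude monitoring beats none. As a minimal sketch, assuming you can already pull (client IP, HTTP status) pairs out of your access log, you can flag clients that pile up "not found" responses, which is what blind scanning usually looks like. The function name `flag_scanners` and the threshold of 20 are arbitrary illustration values, not a recommendation

```python
from collections import Counter


def flag_scanners(events, max_not_found=20):
    """Return client IPs with more than `max_not_found` 404 responses.

    `events` is an iterable of (client_ip, http_status) pairs,
    for example parsed from a web server access log.
    """
    misses = Counter(ip for ip, status in events if status == 404)
    return sorted(ip for ip, count in misses.items() if count > max_not_found)
```

Run something like this on a schedule and send the result anywhere a human will actually see it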

Next come pieces of configuration and API keys embedded directly in the code. It's good if all of this sits in a private repository, otherwise you might wake up in the morning and find a reason to tear your hair out, because your AWS/Azure/GCP/etc. (underline as appropriate) bill suddenly came to 800,000,000 euros instead of the expected 800, because someone found your key and had a good time using resources at someone else's expense. Holy shit, free stuff
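The usual way out is to keep the key out of the code entirely and read it from the environment at startup. A minimal sketch, assuming the key is provided in an environment variable; the name `CLOUD_API_KEY` and the helper `load_api_key` are made up for this example

```python
import os


def load_api_key(name="CLOUD_API_KEY"):
    """Read a secret from the environment and fail loudly if it is missing."""
    key = os.environ.get(name, "").strip()
    if not key:
        # Refuse to start rather than fall back to a hard-coded default
        raise RuntimeError(f"{name} is not set; refusing to start without it")
    return key
```

The key then lives in the deployment environment or a secrets manager, and the repository, private or not, has nothing worth stealing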

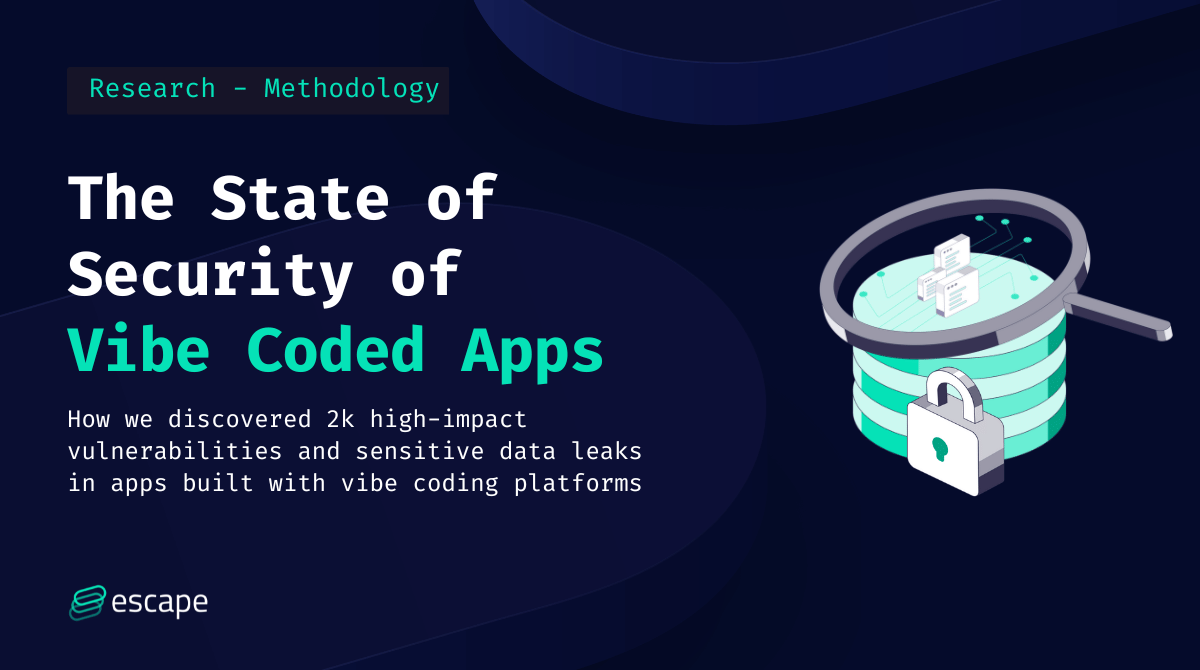

Speaking of the security of the applications themselves - take your pick, typical AI code comes with the full set. ID substitution in requests? Easy, just change the ID and get access to someone else's data. Access checks are very rarely tied to such things. Tokens are a mess, and you're lucky if they aren't stored in local storage. A flood of injections, SQL, XSS, straight out of the manual. Take it and do it
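Two of these, ID substitution and SQL injection, can often be closed in the same query. Here is a minimal sketch using Python's built-in sqlite3, assuming a hypothetical `documents` table with an `owner_id` column; the table, data and function names are invented for illustration

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with two documents owned by different users."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE documents (id INTEGER PRIMARY KEY, owner_id INTEGER, body TEXT)"
    )
    conn.executemany(
        "INSERT INTO documents VALUES (?, ?, ?)",
        [(1, 10, "alice's notes"), (2, 20, "bob's notes")],
    )
    return conn


def get_document(conn, doc_id, current_user_id):
    """Return the document body only if it belongs to the current user."""
    row = conn.execute(
        # Placeholders keep user input out of the SQL text (no injection),
        # and the owner_id condition blocks access to other users' rows
        "SELECT body FROM documents WHERE id = ? AND owner_id = ?",
        (doc_id, current_user_id),
    ).fetchone()
    return row[0] if row else None
```

The important part is that `current_user_id` comes from the authenticated session on the server, never from the request itself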

I guess we should end on a positive note. Despite all the chaos that has ensued, help has come from the creators of the models themselves, who have started adding various features to improve security. But again, AI is a good tool when you clearly understand what you are doing and how you are doing it

The main problem is that all of this undermines the trust of both users and investors in startups. You launched, everything was great, and then you suddenly screwed up. Your users' data has been leaked. You have betrayed their trust. And everyone who comes to you afterwards will find it hard to trust you from the very beginning, because you have already screwed up

Below, I will attach several articles that provide a vivid and accessible overview of modern AI applications
